Is Your Organisation AI-Ready or Just AI-Risky? Bridging the Sovereignty Gap

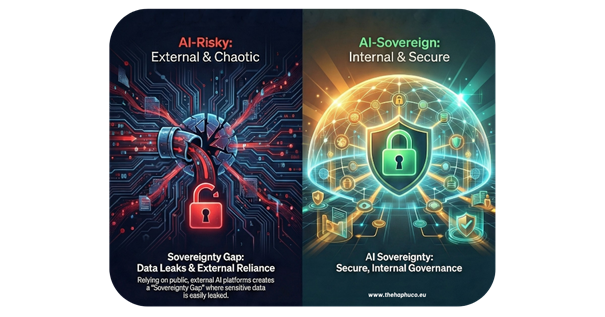

Your staff are currently handing your company’s ‘front door keys’ to strangers. They don’t mean to, but every time they paste a confidential strategy into a public AI tool, your data sovereignty leaks a little further. Here is how to bridge the Sovereignty Gap before it’s too late.

Imagine this: a senior executive, under pressure to meet a deadline, pastes a confidential strategy document into a public AI tool to “quickly summarise it”. It takes seconds. But the moment they hit enter, that proprietary data has left your control and, depending on the provider’s terms, may be retained or used to train future models. Weeks later, a competitor’s query surfaces your trade secrets as a “case study”. The information is public, the leak is irreversible, and the damage to your institution’s integrity is total.

Uploading sensitive data to an external AI tool takes seconds. The risk, however, lasts a lifetime.

The Hidden Cost of “External” Efficiency

In the rush to be “innovative”, many organisations are accidentally handing their “front door keys” to strangers. Relying on publicly available, external platforms for work-related tasks involving non-public data is effectively an information leak. These tools operate outside secure institutional perimeters, posing immediate risks of data breaches and non-compliance.

To protect institutional assets, you must pivot to secure, internal solutions that operate under strict standards for security and personal data protection.

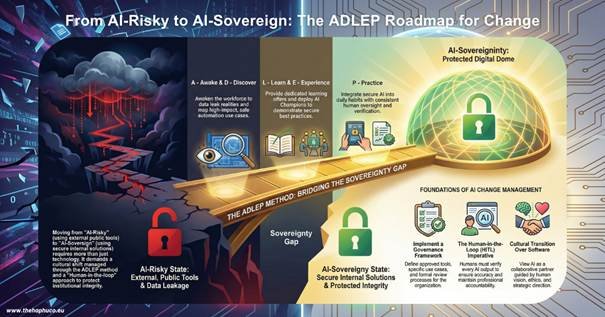

AI Change Management: The Bridge to Sovereignty

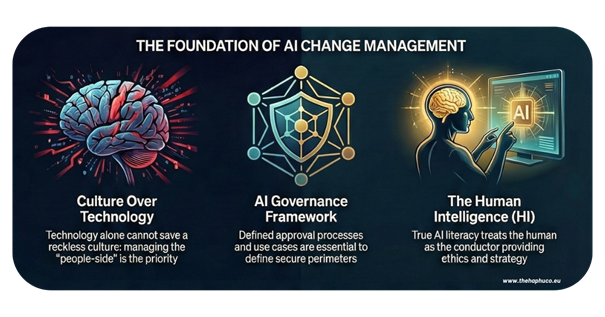

Technology alone won’t save a reckless culture. Implementing secure AI is a profound organisational transition. It requires managing the “people side” of the shift and establishing a formal AI Governance Framework that defines approved tools, use cases, and review processes.

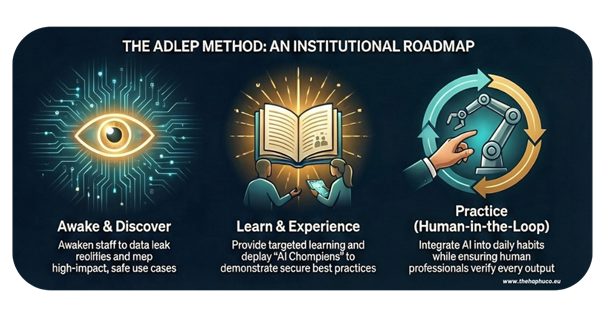

While frameworks like ADKAR focus on the individual, my ADLEP method serves as the institutional roadmap to bridge the “Sovereignty Gap”:

- A – Awake: You must “awaken” your workforce to the brutal reality of data leaks and the critical necessity of using internal tools.

- D – Discover: Map the high-impact use cases—such as document analysis or policy drafting—that can be safely automated.

- L – Learn: Provide a dedicated learning programme that teaches staff to navigate high-pressure digital shifts with clarity.

- E – Experience: Deploy “AI Champions” to share best practices and demonstrate the tangible power of secure AI in a safe environment.

- P – Practice: Turn AI use into a practised habit, integrating it into daily workflows with consistent human oversight.

Mastering the Ask: The TCREI Prompting Structure

In the world of Generative AI, your results are only as good as your instructions. To get the best out of your internal AI, staff must move beyond basic queries and master the TCREI Prompting Structure, a five-step framework designed for high-quality professional output:

- TASK: Clearly define the specific action you want the AI to perform (e.g., “Draft a briefing note”).

- CONTEXT: Provide the background information or knowledge base needed to ground the response.

- REFERENCES: Supply the specific data points, documents, or styles the AI should refer to.

- EVALUATE: Review the initial response against your requirements for accuracy and tone.

- ITERATE: Refine the prompt based on the evaluation to polish the final result.
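For teams that build internal tooling around their approved AI, the five steps can be sketched in code. The Python below is a minimal illustration under stated assumptions, not part of the TCREI framework itself: `ask_internal_ai` is a hypothetical stand-in for whatever secure internal model your organisation uses (no real API is implied), and the "checks" are hypothetical, human-defined rules. Task, Context and References are assembled into one prompt; Evaluate runs the checks on each draft; Iterate feeds any failures back into the prompt and tries again, up to a fixed number of rounds.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class TCREIPrompt:
    """Holds the first three TCREI elements: Task, Context, References."""
    task: str
    context: str
    references: List[str] = field(default_factory=list)
    feedback: List[str] = field(default_factory=list)  # notes added during ITERATE

    def render(self) -> str:
        """Assemble the prompt text that would be sent to the internal model."""
        parts = [f"TASK: {self.task}", f"CONTEXT: {self.context}"]
        if self.references:
            parts.append("REFERENCES:\n" + "\n".join(f"- {r}" for r in self.references))
        if self.feedback:
            parts.append("REVISION NOTES:\n" + "\n".join(f"- {f}" for f in self.feedback))
        return "\n\n".join(parts)


# EVALUATE: a check returns (passed, feedback to use if it failed).
Check = Callable[[str], Tuple[bool, str]]


def run_tcrei(prompt: TCREIPrompt,
              ask_internal_ai: Callable[[str], str],
              checks: List[Check],
              max_rounds: int = 3) -> Tuple[str, int]:
    """Evaluate each draft and iterate until every check passes or max_rounds is reached."""
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = ask_internal_ai(prompt.render())
        failures = [msg for ok, msg in (check(draft) for check in checks) if not ok]
        if not failures:
            return draft, round_no
        prompt.feedback.extend(failures)  # ITERATE: refine the prompt with what failed
    return draft, max_rounds


# Usage with a stand-in model (hypothetical; replace with your approved internal tool).
def fake_model(text: str) -> str:
    return "Briefing note (formal)" if "REVISION NOTES" in text else "Briefing note"


def is_formal(draft: str) -> Tuple[bool, str]:
    return ("formal" in draft, "Use a formal tone.")


p = TCREIPrompt(task="Draft a briefing note",
                context="Q3 policy review",
                references=["Internal style guide"])
result, rounds = run_tcrei(p, fake_model, [is_formal])
print(result, rounds)  # → Briefing note (formal) 2
```

Keeping the checks as plain functions lets each team encode its own accuracy and tone requirements. Passing them does not replace human judgement: a person still reviews the final draft before it is used.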

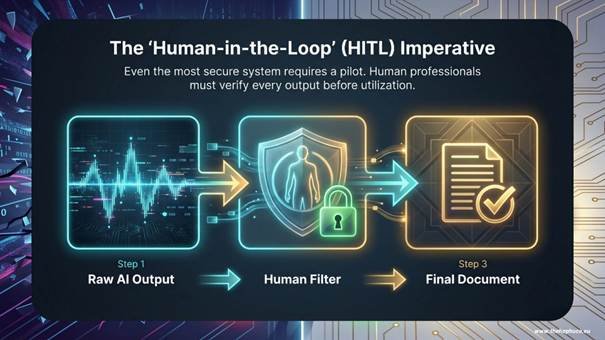

The ‘Human-in-the-Loop’ Imperative

Even the most secure system requires a pilot. A “Human-in-the-loop” (HITL) approach is not optional; it is a fundamental requirement for accountability. AI systems can produce biased or inaccurate results, so human professionals must verify every output before it is used or published.

The Mindset Shift: The Human Intelligence

The most significant barrier to AI scaling is not the software; it is the cultural transition. We must stop viewing AI with fear and start embracing it as a collaborative partner.

Crucially, we must recognise that behind every good AI, there is a brilliant Human Intelligence.

The technology is merely an instrument; the human is the intelligence who provides the vision, ethics, and strategic direction. True AI literacy flourishes when staff feel supported through targeted training, moving from passive users to empowered conductors of this new technology.

- Are you ready to stop being “AI-Risky” and start being “AI-Sovereign”?

- What steps is your organisation taking to protect its data while embracing innovation?

Share your thoughts below.

Alba Ridao-Bouloumié

Founder @TheHapHuCo | Transformational Coach | AI Strategist | Author

With over 22 years of experience in international leadership and change management, I help organisations navigate these complex digital shifts by blending human-centric coaching with AI-driven productivity.

#TheHapHuCo #CareerTransformation #PilotMindset #AlbaRidao #DurableSkills #2026LabourParadox #SustainableLeadership #AIStrategy

Access the full ADLEP® Framework at thehaphuco.eu or find your manual for freedom at amazon.com/author/albaridaobouloumie.
